The Coalition for Health AI this past Friday unveiled new plans for how it would certify independent artificial intelligence assurance labs.

The draft frameworks come as the group – comprising health system heavy-hitters such as Mayo Clinic, Penn Medicine and Stanford, along with Amazon, Google, Microsoft and other Big Tech giants – set a timeline for its aim to standardize the output of testing labs by grading AI and ML models with so-called CHAI Model Cards – which the group likens to ingredient and nutrition labels on food products.

CHAI leaders say the certification rubric and model card designs can be expected – after incorporating stakeholder feedback from members, partners and the public – by the end of April 2025.

WHY IT MATTERS

Created with the ANSI National Accreditation Board and several emerging quality assurance labs using ISO 17025 – the predominant standard for testing and calibration laboratories worldwide – the draft CHAI certification program framework requires, among other things, mandatory disclosure of conflicts of interest between assurance labs and model developers, and the protection of data and intellectual property. (That standard was also used for ONC’s Electronic Health Record certification program.)

The testing and cert program also incorporates data quality and integrity requirements derived from FDA’s guidance on the use of high-quality real-world data, CHAI officials note, as well as testing and evaluation metrics sourced from the coalition’s various working groups – all in alignment with the National Academy of Medicine’s AI Code of Conduct.

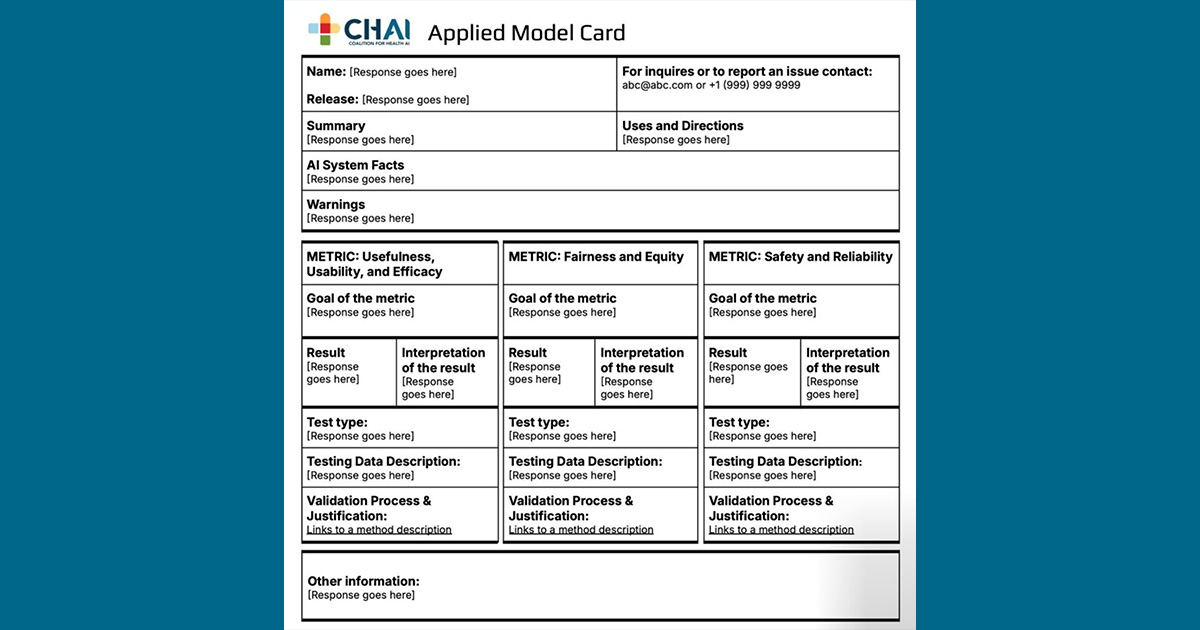

The draft model card – designed by a workgroup comprising experts from regional health systems, electronic health record vendors, medical device makers and others – offers a standardized template intended to provide a degree of transparency about algorithms, presenting certain baseline information to help end users evaluate the performance and safety of AI tools.

That information includes the identity of the AI developer, the model’s intended uses, targeted patient populations and more. Other performance metrics include security and compliance accreditations and maintenance requirements. The cards also have information about known risks and out-of-scope uses, biases and other ethical considerations.

CHAI says it will continue to engage with healthcare stakeholders – including patient advocates, small and rural health systems and tech startups – for additional guidance on model card development. The group is also seeking feedback via its assurance lab and model card forms.

“The rapid evolution of AI in healthcare has created a landscape that can feel unregulated and fragmented,” said CHAI applied model card workgroup member Demetri Giannikopoulous, chief transformation officer at Aidoc, which helped lead design and development of the cards, in a statement.

“By establishing a common framework that aligns with federal regulations, we are moving beyond theoretical discussions and building the foundation for scalable, reliable, and ethical AI solutions that can be adopted across the healthcare ecosystem.”

THE LARGER TREND

Since its founding in 2021, CHAI has been working, along with others who have similar goals, to be the go-to source for trusted AI – whether that refers to patient safety, privacy and security, fairness and equity, model transparency or utility and efficacy.

As the technology advances and proliferates, the coalition is growing too – convening an array of stakeholders from hospitals and health systems, Big Tech, startups, government agencies and patient advocates.

ON THE RECORD

“This has been a pivotal year for CHAI on our journey to help enable trusted independent assurance of AI solutions with local monitoring and validation,” said CHAI CEO Dr. Brian Anderson in a statement. “We are thrilled with the progress of our CHAI workgroups who represent a diversity of perspectives and expertise across the health ecosystem.

“These frameworks for certification and basic transparency are building blocks of responsible health AI. They will help to streamline the path for AI innovation, build trust with patients and clinicians, and position health systems and solution innovators ahead of emerging state and federal regulations.”

Mike Miliard is executive editor of Healthcare IT News.

Healthcare IT News is a HIMSS publication.