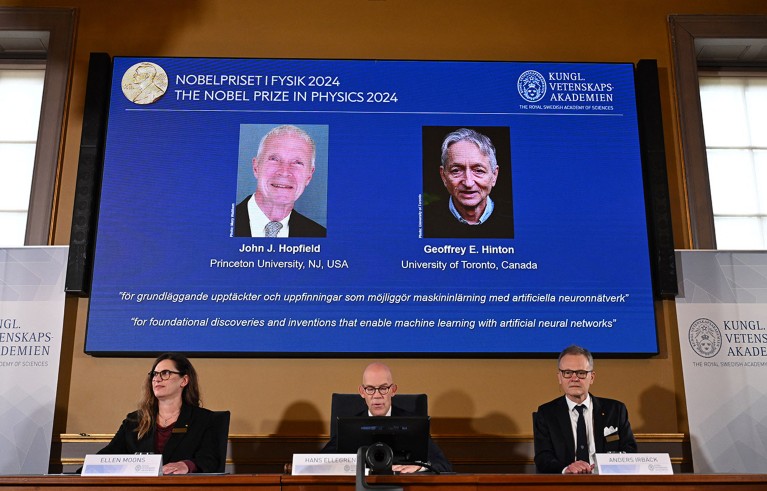

The winners were announced by the Royal Swedish Academy of Sciences in Stockholm. Credit: Jonathan Nackstrand/AFP via Getty

Two researchers who developed tools for understanding the neural networks that underpin today’s boom in artificial intelligence (AI) have won the 2024 Nobel Prize in Physics.

John Hopfield at Princeton University in New Jersey, and Geoffrey Hinton at the University of Toronto, Canada, share the 11-million Swedish kronor (US$1 million) prize, announced by the Royal Swedish Academy of Sciences in Stockholm on 8 October.

Both used tools from physics to come up with methods that power artificial neural networks, which exploit brain-inspired, layered structures to learn abstract concepts. Their discoveries “form the building blocks of machine learning, that can aid humans in making faster and more-reliable decisions”, said Nobel physics committee chair Ellen Moons, a physicist at Karlstad University, Sweden, during the prize announcement. “Artificial neural networks have been used to advance research across physics topics as diverse as particle physics, material science and astrophysics.”

Machine memory

In 1982, Hopfield, a theoretical biologist with a background in physics, came up with a network that described connections between virtual neurons as physical forces [1]. By storing patterns as a low-energy state of the network, the system was able to re-create these patterns when prompted with something similar. It became known as associative memory, because the way in which it ‘recalls’ things is similar to the brain trying to remember a word or concept on the basis of related information.
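As a rough illustration of storing a pattern as a low-energy state and recalling it from a corrupted cue, here is a minimal sketch in plain Python. It assumes the common textbook formulation (units taking values +1 or -1, a Hebbian weight rule, one-unit-at-a-time updates), which is not necessarily the exact form used in the 1982 paper. The function names `train`, `energy` and `recall`, and the eight-unit pattern, are hypothetical choices made for this example.

```python
def train(patterns):
    """Hebbian rule (textbook assumption): strengthen links between units that agree."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    w[i][j] += p[i] * p[j] / n
    return w


def energy(w, s):
    """Network energy; stored patterns sit at low values of this quantity."""
    n = len(s)
    return -0.5 * sum(w[i][j] * s[i] * s[j] for i in range(n) for j in range(n))


def recall(w, state, max_sweeps=10):
    """Update one unit at a time until nothing changes (a local energy minimum)."""
    s = list(state)
    n = len(s)
    for _ in range(max_sweeps):
        changed = False
        for i in range(n):
            field = sum(w[i][j] * s[j] for j in range(n))
            new_value = 1 if field >= 0 else -1
            if new_value != s[i]:
                s[i] = new_value
                changed = True
        if not changed:
            break
    return s


# Hypothetical 8-unit pattern, used only for illustration.
stored = [1, -1, 1, -1, 1, -1, 1, -1]
weights = train([stored])

# Corrupt two units, then let the network settle.
noisy = list(stored)
noisy[0] = -noisy[0]
noisy[3] = -noisy[3]
recovered = recall(weights, noisy)

print(recovered == stored)
print(energy(weights, noisy), energy(weights, recovered))
```

In this sketch the corrupted cue starts at a higher energy than the stored pattern, and each update can only keep the energy the same or lower it, which is why the state settles back onto the memory.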

Hinton, a computer scientist, used principles from statistical physics, which collectively describes systems that have too many parts to track individually, to further develop the ‘Hopfield network’. By building probabilities into a layered version of the network, he created a tool that could recognize and classify images, or generate new examples of the type that it was trained on [2].
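The idea of building probabilities into a layered network can be sketched with stochastic units: instead of switching on deterministically, each unit switches on with a probability set by its input. The example below is a minimal sketch under stated assumptions, not Hinton's method or training procedure. It assumes a two-layer arrangement (visible and hidden units) with a logistic switching probability, as in common textbook treatments, and the weights are hypothetical values picked by hand rather than learned.

```python
import math
import random


def sigmoid(x):
    """Logistic function: maps any input to a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))


def hidden_probabilities(visible, weights, hidden_bias):
    """Probability that each hidden unit switches on, given the visible layer."""
    return [
        sigmoid(hidden_bias[j] + sum(v * weights[i][j] for i, v in enumerate(visible)))
        for j in range(len(hidden_bias))
    ]


def sample_units(probabilities, rng):
    """Draw a 0/1 state for each unit according to its probability."""
    return [1 if rng.random() < p else 0 for p in probabilities]


# Hypothetical hand-picked weights: 3 visible units, 2 hidden units.
weights = [
    [2.0, -2.0],
    [2.0, -2.0],
    [-2.0, 2.0],
]
hidden_bias = [0.0, 0.0]

visible = [1, 1, 0]
probs = hidden_probabilities(visible, weights, hidden_bias)

rng = random.Random(0)  # seeded so repeated runs draw the same sample
hidden_sample = sample_units(probs, rng)

print(probs)
print(hidden_sample)
```

With these weights, the first hidden unit is very likely to switch on for the input `[1, 1, 0]` and the second is very unlikely to. In a trained network, such probabilities would be shaped by the data rather than set by hand.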

These processes differed from earlier kinds of computation, in that the networks were able to learn from examples, including from complex data. This would have been challenging for conventional software reliant on step-by-step calculations.

The networks are “grossly idealized models that are as different from real biological neural networks as apples are from planets”, Hinton wrote in Nature Neuroscience in 2000. But they proved useful and have been built on widely. Neural networks that mimic human learning form the basis of many state-of-the-art AI tools, from large language models to machine-learning algorithms capable of analysing large swathes of data, including the protein-structure-prediction model AlphaFold.

Speaking by telephone during the physics-prize announcement, Hinton said that learning he had won the Nobel was “a bolt from the blue”. “I’m flabbergasted, I had no idea this would happen,” he said. He added that advances in machine learning “will have a huge influence, it will be comparable with the industrial revolution. But instead of exceeding people in physical strength, it’s going to exceed people in intellectual ability”.

In recent years, Hinton has become one of the loudest voices calling for safeguards to be placed around AI. He says he became convinced last year that digital computation had become better than the human brain, thanks to its ability to share learning from multiple copies of an algorithm, running in parallel. “It made me think [that these systems are] going to become more intelligent than us sooner than I thought,” he said on 31 May, speaking virtually to the AI for Good Global Summit in Geneva, Switzerland. “Up until that point, I’d spent 50 years thinking that if we could only make it more like the brain, it will be better.”

Motivated by physics

Hinton also won the A. M. Turing Award in 2018 — sometimes described as the ‘Nobel of computer science’. Hopfield, too, has won several other prestigious physics awards, including the 2001 Dirac Medal.

Hopfield’s “motivation was really physics, and he invented this model of physics to understand certain phases of matter”, says Karl Jansen, a physicist at the German Electron Synchrotron (DESY) in Zeuthen, who describes the work as “groundbreaking”. After decades of development, neural networks have become an important tool in analysing data from physics experiments and in understanding the types of transitions between phases that Hopfield had set out to study, Jansen adds.

Lenka Zdeborová, a specialist in the statistical physics of computation at the Swiss Federal Institute of Technology in Lausanne (EPFL), says she was pleasantly surprised that the Nobel Committee recognized the importance of ideas from physics for understanding complex systems. “This is a very generic idea, whether it’s molecules or people in society.”

In the past five years, the Abel Prize and Fields Medals have also celebrated the cross-fertilization between mathematics, physics and computer science, particularly contributions to statistical physics.

Both Hopfield and Hinton “have brought incredibly important ideas from physics into AI”, says Yoshua Bengio, a computer scientist who shared the 2018 Turing Award with Hinton and fellow neural-network pioneer Yann LeCun. Hinton’s seminal work and infectious enthusiasm made him a great role model for Bengio and other early proponents of neural networks. “It inspired me incredibly when I was just a university student,” says Bengio, who is scientific director of Mila — Quebec Artificial Intelligence Institute in Montreal, Canada. For decades, many computer scientists regarded neural-network research as fruitless, says Bengio, but there was a turning point in 2012, when Hinton and others used the technology to win a major image-recognition competition.

Brain-model benefits

Biology has also benefited from these artificial models of the brain. May-Britt Moser, a neuroscientist at the Norwegian University of Science and Technology in Trondheim and a winner of the 2014 Nobel Prize in Physiology or Medicine, says she was “so happy” when she saw this year’s Nobel physics winners being announced. Versions of Hopfield’s network models have been useful to neuroscientists, she says, for investigating how neurons work together in memory and navigation. His model, which describes memories as low points of a surface, helps researchers to visualize how certain thoughts or anxieties can get fixed and retrieved in the brain, she adds. “I love to use this as a metaphor to talk to people when they are stuck.”

Today, neuroscience relies on network theories and machine-learning tools, which stem from Hopfield’s and Hinton’s work, to understand and process data on thousands of cells simultaneously, says Moser. “It’s like a fuel for understanding things that we couldn’t even dream of when we started in this field.”

“The use of machine-learning tools is having immeasurable impact on data analysis and our potential understanding of how brain circuits may compute,” says Eve Marder, a neuroscientist at Brandeis University in Waltham, Massachusetts. “But these impacts are dwarfed by the many impacts that machine learning and artificial intelligence are having in every aspect of our daily lives.”